Filed under: Equipment, Guest Blogger, photogrammetry, Technology, Training, Workshops

Christopher Ciccone is a photographer at the North Carolina Museum of Art, and this is a post he wrote for the museum’s blog Circa. Chris attended the 4-day photogrammetry training class taught by the CHI team at the museum in May 2018 and describes the experience here. Thank you for sharing your blog, Chris!

Photogrammetry is the science of making measurements from photographs of an object (or in aerial photogrammetry, a geographic area). This is done by taking a series of carefully plotted still photographs that incorporate targets of known size and then analyzing the images with specialized software. The resulting data can then be used to generate a variety of output products such as maps, detailed renderings, and 3-D models for use in a number of applications.

Dense point cloud rendering of sculptor William Artis’s Michael. The blue rectangles represent the position of the camera for each image that was used to create the 3-D model.

Although photogrammetry as a scientific measurement technique has existed since the nineteenth century, it has been the advent of digital photography and high-powered computational capacity that has made it a practical tool for scholars, researchers, and photographers. Because photogrammetry can be employed on objects of any size, its usefulness in the cultural heritage sector is vast. Interesting uses of photogrammetry include, for example, documentation of historic sites that might be slated for destruction or are in danger of ongoing environmental damage.

Photogrammetry at the NCMA

In May the Museum’s Photography and Conservation departments hosted instructors Carla Schroer and Mark Mudge from Cultural Heritage Imaging in San Francisco for a four-day photogrammetry training workshop. Participants included myself, NCMA Head Photographer Karen Malinofski, and NCMA objects conservator Corey Riley, as well as colleagues from the National Park Service and the University of Virginia.

Workshop participants Cari Goetcheus and Gregory Luna Golya photograph William Artis’s Michael on a turntable to facilitate views from all angles of the object.

William Ellsworth Artis, Michael, mid-to-late 1940s, H. 10 1/4 x W. 6 x D. 8 in., terracotta, Purchased with funds from the National Endowment for the Arts and the North Carolina State Art Society (Robert F. Phifer Bequest)

In the course of the class, we photographed several artworks in the Museum’s permanent collection, from a small bust by William Artis to Ledelle Moe’s monumental outdoor sculpture Collapse. The technique consisted of taking “rings” of overlapping photographs around the object, at optimal distance relative to focal length, with camera lenses set at a fixed focus point and aperture. The primary objective in each case was to establish a consistent, rule-based workflow in order to reduce the measurement uncertainty of the rendered photoset, which may then be used to generate reliable 3-D data as well as be archived and used for further study by others.
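
As a rough illustration of why camera distance is chosen relative to focal length, here is a minimal sketch assuming a simple pinhole camera model. The function names and the sensor, lens, and distance values are hypothetical and are not taken from the workshop; the point is only that the size of one pixel on the object scales with distance divided by focal length.

```python
def ground_sample_distance_mm(pixel_pitch_um, focal_length_mm, distance_mm):
    """Size of one sensor pixel projected onto the object, in mm (pinhole model)."""
    if min(pixel_pitch_um, focal_length_mm, distance_mm) <= 0:
        raise ValueError("all inputs must be positive")
    return (pixel_pitch_um / 1000.0) * distance_mm / focal_length_mm


def frame_width_on_object_mm(sensor_width_mm, focal_length_mm, distance_mm):
    """Width of the object area covered by a single frame, in mm."""
    if min(sensor_width_mm, focal_length_mm, distance_mm) <= 0:
        raise ValueError("all inputs must be positive")
    return sensor_width_mm * distance_mm / focal_length_mm


def distance_for_target_gsd_mm(target_gsd_mm, pixel_pitch_um, focal_length_mm):
    """Camera-to-object distance that yields the requested pixel size on the object."""
    if min(target_gsd_mm, pixel_pitch_um, focal_length_mm) <= 0:
        raise ValueError("all inputs must be positive")
    return target_gsd_mm * focal_length_mm / (pixel_pitch_um / 1000.0)


if __name__ == "__main__":
    # Hypothetical setup: 5 micrometre pixels, 36 mm wide sensor, 50 mm lens, 1 m away.
    gsd = ground_sample_distance_mm(5.0, 50.0, 1000.0)
    width = frame_width_on_object_mm(36.0, 50.0, 1000.0)
    print(f"pixel size on object: {gsd:.3f} mm, frame width: {width:.0f} mm")
```

Halving the distance or doubling the focal length halves the pixel size on the object, which is one reason a fixed focus point and a consistent distance help keep the measurement uncertainty of a photoset uniform.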

At the NCMA we plan to employ the technique for such projects as monitoring the surface wear over time of our outdoor sculptures, revealing surface markings of ancient objects for insight into makers’ techniques and tools, and generating 3-D renderings of delicate artifacts that can be manipulated and viewed in virtual environments by museum visitors and scholars. Other applications will become possible as 3-D processing tools are improved.

Will Rourk of the University of Virginia and NCMA Head Photographer Karen Malinofski photograph details of Collapse.

Christopher Ciccone is a photographer at the North Carolina Museum of Art.

Filed under: Conferences, Grants, Guest Blogger, Technology | Tags: 3D data, CHI, conservation, Digital Imaging, Digital Preservation, Open Source, RTI, symposium

Our guest blogger, Emily B. Frank, is currently pursuing a Joint MS in Conservation of Historic and Artistic Works and MA in History of Art at New York University, Institute of Fine Arts. Thank you, Emily!

With the National Endowment for the Humanities (NEH) back on the chopping block in the most recent federal budget proposal, I feel particularly privileged to have taken part in the NEH-funded symposium, Illumination of Material Culture, earlier this month.

Co-hosted by The Metropolitan Museum of Art and Cultural Heritage Imaging (CHI), the symposium brought together conservators, curators, archaeologists, imaging specialists, cultural heritage and museum photographers, and the gamut of engineers to discuss and debate uses, innovations, and limitations of computational imaging tools. This interdisciplinary audience fostered an environment for collaboration and progress, and a few themes emerged.

(1) The emphasis among practitioners seems to have shifted from isolated techniques to integrating a range of data types.

E. Keats Webb, Digital Imaging Specialist at the Smithsonian’s Museum Conservation Institute, presented “Practical Applications for Integrating Spectral and 3D Imaging,” the focus of which was capturing and processing broadband 3D data. Holly Rushmeier, Professor of Computer Science at Yale University, gave a talk entitled “Analyzing and Sharing Heterogeneous Data for Cultural Heritage Sites and Objects,” which focused on CHER-Ob, an open source platform developed at Yale to enhance the analysis, integration, and sharing of textual, 2D, 3D, and scientific data. CHI’s Mark Mudge presented a technique for the integrated capture of RTI and photogrammetric data. The theme of integration propagated through the panelists’ presentations and the lightning talks, including but not limited to presentations by Kathryn Piquette, Senior Research Consultant and Imaging Specialist at University College London, on the integration of broadband multispectral and RTI data; Nathan Matsuda, PhD Candidate and National Science Foundation Graduate Fellow at Northwestern University, on work at NU-ACCESS with photometric stereo and photogrammetry; and a lightning talk by Chantal Stein and myself, in collaboration with Sebastian Heath, Professor of Digital Humanities at the Institute for the Study of the Ancient World, about the integration of photogrammetry, RTI, and multiband data into a single, interactive file in Blender, a free, open source 3D graphics and animation program.

(2) There is an emerging emphasis on big data and the possibilities of machine learning.

Paul Messier, art conservator and head of the Lens Media Lab at the Institute for the Preservation of Cultural Heritage, Yale University

The notion of machine learning and the possibilities it might unlock were addressed in multiple presentations, perhaps most notably in “RTI: Beyond Relighting,” a panel discussion moderated by Paul Messier, Head of the Lens Media Lab, Institute for the Preservation of Cultural Heritage (IPCH), Yale University. Dale Kronkright presented work in progress at the Georgia O’Keeffe Museum in collaboration with NU-ACCESS that utilizes algorithms to track change to the surfaces of paintings, focusing on the dimensional change of metal soaps. Paul Messier briefly described the work being done at Yale to explore the possibilities for machine learning to work iteratively with connoisseurs to push data-driven research forward.

(3) The development of open tools for sharing and presenting computational data via the web and social media is catching up.

Graeme Earl, Director of Enterprise and Impact (Humanities) and Professor of Digital Humanities at the University of Southampton, UK, gave a keynote entitled “Open Scholarship, RTI-style: Museological and Archaeological Potential of Open Tools, Training, and Data,” which kicked off the discussion about open tools and where the future is heading. Szymon Rusinkiewicz, Professor of Computer Science at Princeton University, presented “Modeling the Past for Scholars and the Public,” a case study of a cross-listed Archaeology-Computer Science course given at Princeton in which students generated teaching tools and web content that provided curatorial narrative for visitors to the museum. CHI’s Carla Schroer presented new tools for collecting and managing metadata for computational photography. Roberto Scopigno, Research Director of the Visual Computing Lab, Consiglio Nazionale delle Ricerche (CNR), Istituto di Scienza e Tecnologie dell’Informazione (ISTI), Pisa, Italy, delivered the keynote on the second day of the symposium about 3DHOP, a new web presentation and collaboration tool for computational data.

We had the privilege of hearing from Tom Malzbender, without whose work at HP Labs in the early 2000s this symposium would never have happened.

The keynotes at the symposium were streamed through The Met’s Facebook page. The other talks were recorded and will be available in three to four weeks. Enjoy!

Filed under: Guest Blogger, Technology, Training, Workshops | Tags: 3D data, conservation, photogrammetry, Training

Lauren Fair is Associate Objects Conservator at Winterthur Museum, Garden, & Library in Winterthur, Delaware. She also serves as Assistant Affiliated Faculty for the Winterthur/University of Delaware Program in Art Conservation (WUDPAC). Lauren was a participant in CHI’s NEH grant-sponsored 4-day training class in photogrammetry, August 8-11, 2016 at Buffalo State College. She posted an account of her experience in the class in her own blog, “A Conservation Affair.” Here is an excerpt from her fine post.

I have discovered the perfect way to decompress after a four-day intensive seminar on 3D photogrammetry: go to your friend’s cabin on a small island in a remote part of Canada. While you take in the fresh air and quiet of nature, you can then reflect on all that you have shoved into your brain in the past week – and feel pretty good about it!

The Cultural Heritage Imaging (CHI) team – Mark, Carla, and Marlin – have done it again, in that they have taken their brilliance and passion for photography, cultural heritage, and documentation technologies, and formulated a successful workshop on 3D photogrammetry that effectively passes on their expertise and “best practice” methodologies.

The course was made possible by a Preservation and Access Education and Training grant awarded to CHI by the National Endowment for the Humanities.

(Read the rest of Lauren’s blog here.)

This blog by Carla Schroer, Director at Cultural Heritage Imaging, was first posted on the blog site, Center for the Future of Museums, founded by Elizabeth Merritt as an initiative of the American Alliance of Museums.

My organization, Cultural Heritage Imaging (CHI), has been involved in the development of open source software for over a decade. We also use open source software developed by others, as well as commercial software.

What drove us to make our work open for other people to use and adapt?

We are a small, independent nonprofit with a mission to advance the state of the art of digital capture and documentation of the world’s cultural, historic, and artistic treasures. Development of technology is only one piece of our work – we are involved in many aspects of scientific imaging, including developing methodology, training museum and heritage professionals, and engaging in imaging projects and research. Because we are small, and because the best ideas and most interesting breakthroughs can happen anywhere, we collaborate with others who share our interests and who have expertise in using digital cameras to collect empirical data about the real world.

Our choice to use an open source license with the technology that is produced in this kind of collaboration serves both the organizations involved in its development and the adopters of the software. By agreeing to an open source license, the people and institutions that contribute to the software development are assured that the software will be available and that they and others can use it and modify it now and in the future. It also keeps things fair among the collaborators, ensuring that no one group exploits the joint work.

How does open source benefit its users?

It’s beneficial not just because the software is free. There is a lot of free software that is distributed only in the “executable” version without making the source code available to others to use and modify. One advantage to users who care about the longevity of their data – and, in our case, images and the results of image processing – is that the processes being applied to the images are transparent. People can figure out what is being done by looking at the source code. Also, making the source code available increases the likelihood that software to do the same kind of processing will be available in the future. It isn’t a guarantee, but it increases the chances for long-term reuse. Finally, open source benefits the community of people all working in a particular area, because other researchers, students, and community members can add features, fix errors, and customize for special uses. With a “copyleft” license, like the GNU General Public License that we use, all modifications and additions to the software have to be released under this same license. This ensures that when others build on the software, their modifications and additions will be “open” as well, so that the whole community benefits. (This is a generalization of the terms; read more if you want to understand the details of a particular license.)

Open source is a licensing model for software, nothing more. The fact that it is “open” tells you nothing about the quality of the software, whether it will meet your needs, whether anyone is still working on it, how difficult or easy to learn and use it is, and many other questions you should think about when choosing technology. The initial cost of software is only one consideration in adopting a technology strategy. For example, what will it cost to switch to another solution, if this one no longer does what you need? Will you be left with a lot of files in a proprietary format that other software can’t open or use?

That leads me to remark on a related issue: open file formats. Whether you choose to use commercial software or open source software, you should think about how your data and resulting files will be stored and used. Almost always, you should choose open formats (like JPEG, PDF, and TIFF) because that means that any software or application is allowed to read and write to the format, which protects the reuse of the data in the long term. Proprietary formats (like camera RAW files and Adobe PSD files) may not be usable in the future. The Library of Congress Sustainability of Digital Formats web site has great information about this topic.

At Cultural Heritage Imaging, we use an open source model for development of imaging software because it helps foster collaboration. It also provides transparency as well as a higher likelihood that the technology and resulting files will be usable in the future. If you want to learn more about our approach to the longevity and reuse of imaging data, read about the Digital Lab Notebook.

Filed under: Guest Blogger, Technology, Training | Tags: 3D data, conservation, photogrammetry, Training

Our guest blogger, Emily B. Frank, is currently pursuing a Joint MS in Conservation of Historic and Artistic Works and MA in History of Art at New York University, Institute of Fine Arts. Thank you, Emily!

I’ve been following the development and improvement of photogrammetry software for the past few years. As an objects conservation student with a growing interest in the role of digital imaging tools in the study and conservation of art, I’ve always found photogrammetry of theoretical interest. In my opinion, until recently the limited accuracy of the software and tools impeded widespread applications in conservation. With recent advances, this is no longer the case; accuracy better than 1/10 mm has changed the game.

This January, the stars aligned and I was able (and lucky) to participate in CHI’s January photogrammetry training. From January 11 to 14, I lived and breathed photogrammetry in CHI’s San Francisco studio. The four-day course was co-instructed by Carla Schroer, Mark Mudge, and Marlin Lum, who each brought something extraordinary to the experience.

For those of you that don’t know them personally, I’ll provide context; these guys are total gurus. Carla brings business and tech know-how from years of work in Silicon Valley earlier in her career. She has a warm and direct teaching style that is accessible no matter what your photography/imaging background. Mark is a true visionary; he is rigorous and inventive, and always carefully pushing the brink of what’s possible. Marlin is the photographer of the group and a master at fabricating the perfect tool/workstation for the capture of near-perfect source images. His never-ending positivity is contagious. Together they are practically unstoppable. It’s obvious that they love teaching and truly believe in the power of the tools they are sharing.

The class began with a brief theoretical introduction, then dove into practical aspects of capture and processing. We swiftly covered how to approach a range of situations, what equipment to use, where to compromise, and where to stick to a specific protocol, etc. We focused on methodology, and we practiced a lot. We moved through capture and processing of increasingly complex projects, and we received detailed handouts to supplement everything we were learning. The class also afforded students the opportunity to work on a larger group project; this January we captured a 3D model of a reproduction colossal Olmec head located outdoors at City College of San Francisco. CHI focuses on repeatability and process in order to achieve a robust, reproducible result.

Setting up for image capture at the January 2016 photogrammetry class; blogger Emily Frank is far left.

An added benefit of the class was the insight gained through conversation with the other students, who included museum photographers, landscape photographers, archaeologists, classicists, 3D-imaging academics, and the founder of a virtual reality start-up. This diversity fostered a breeding ground for inventive implementation, and the inevitable collaboration left me envisioning new ways to employ photogrammetry as a tool in my work.

For those of you who have ever considered the use of photogrammetry, I would strongly encourage you to sign up for one of CHI’s upcoming trainings. I still have a lot to learn and master, but I left the training with the feeling that with practice I would be able to capture and process 3D data with the accuracy and resolution to meaningfully contribute to the academic study of works of art.

Notes from CHI:

- Emily Frank has included a link to a 3D model of a reproduction colossal Olmec head located at City College of San Francisco. This huge outdoor sculpture was a practice subject in CHI’s January photogrammetry class in which our guest blogger Emily Frank was a participant. The model was created with photogrammetry, and it is presented on Sketchfab, a website for 3D models.

- See our Flickr photo album of the January 2016 photogrammetry class in which blogger Emily Frank participated.

Filed under: Guest Blogger, On Location, Technology | Tags: Archaeology, biofilm removal, bronze, Lydia, Roman, RTI, Sardis

Our guest blogger, Emily B. Frank, is a student conservator on the Sardis Archaeological Expedition. Currently she is pursuing a Joint MS in Conservation of Historic and Artistic Works and MA in History of Art at New York University, Institute of Fine Arts. Thank you, Emily!

Sardis, the capital city of the Lydian empire in the seventh and sixth centuries BC, is often best remembered for the invention of coinage. Remains of a monumental temple of Artemis, begun during the Hellenistic period and never finished, still stand tall today. In Roman times, the city was famous as one of the Seven Churches of Asia in the Book of Revelation. In the fourth and fifth centuries AD, Sardis boasted what is still the largest known synagogue in antiquity. Sardis flourished and continued to grow in the Late Roman period until its decline by the seventh century AD.

Archaeological excavations at Sardis began over a century ago and are currently led by Dr. Nicholas D. Cahill, professor of Art History at the University of Wisconsin, Madison. The excavated material is vastly diverse and the conservation efforts there equally so. Conservation this season, under Harral DeBauche, a third-year conservation student at New York University, Institute of Fine Arts, supported active excavation across over 1,000 years of antiquity and addressed a number of site preservation issues. RTI greatly benefited the conservators and archaeologists in a couple of significant ways.

Significant finds with extremely shallow incised designs/inscriptions and impressions were made legible with RTI. RTI was helpful in understanding a bronze triangle recovered from the corridor of a Late Roman house (Fig. 1).

The triangle is incised with three images of a female deity and a border of magical signs (Fig. 2). Its use is likely connected with religious ritual practice in Asia Minor between the third and sixth centuries AD.

The legibility of the inscriptions, aided by RTI, reinforced the connection between the triangle’s inscriptions and material and written sources. Two comparanda for the triangle, one from Pergamon and one from Apamea, were identified, and the magic symbols on the triangle were connected with rituals described in the Greek Magical Papyri. RTI also aided in decoding and documenting a lead curse tablet and in understanding the weave structure of bitumen basketry impressions.

Additionally, a multi-year biofilm removal project of the Artemis Temple at Sardis is currently underway, headed by Michael Morris and Hiroko Kariya, conservators in private practice. The removal of this biofilm is carried out by a six-day process. RTI was used experimentally to document the changes to the stone throughout the removal process (Fig. 3).

RTIs were taken before treatment, during treatment (day 3), and after treatment (day 7). Because the biocide continues to work for months after its application, a final RTI will be taken next summer. Initial comparison of the images showed no loss to the stone surface as a result of the biofilm removal process. All very exciting!

To find out more about the excavations at Sardis, see http://sardisexpedition.org/.

Filed under: Commentary, Guest Blogger | Tags: Digital Imaging, Digital Preservation, Preservation, threats to heritage, world history

Our guest blogger, Matt Hinson, is a junior at the School of Foreign Service at Georgetown University in Washington, D.C. He spent the summer as an intern at CHI. Thanks, Matt!

During my summer internship at Cultural Heritage Imaging (CHI), I learned a great deal about the danger facing many of the world’s treasures as well as the efforts to save them. My background in international history leads me to believe that international law and policy can help to address many of the dangers. I have also learned how CHI is contributing to the revolution in how we interact with information and deal with these cultural heritage threats.

The variety of projects that CHI undertakes demonstrates the wide range of threats facing cultural heritage. Although some of CHI’s most recent projects have dealt with weathering and natural deterioration, it is important to understand the other risks to humanity’s greatest treasures. One can categorize tangible cultural heritage sites into natural formations, historic structures, including cities and sculptures, and inscriptions. Complex rock formations, the cities built by ancient cultures, works of art, and monuments are all included. It is also important to recognize cultural heritage sites that hold symbolic value to a specific community or group. Many of these are protected by national parks and museums, but only to a certain extent. Multiple forces continue to threaten this material.

Threats can be divided into man-made and natural. The man-made category encompasses destruction from conflict, construction, and development. Human neglect can also be included as a potential danger to the survival of important sites. War has become one of the most widely observed man-made threats to cultural heritage, particularly in ongoing conflicts in Africa and the Middle East. Groups fighting for ideological reasons, such as religious fundamentalists, have attempted to destroy artifacts that contradict their beliefs. The recent destruction of the ancient city of Nimrud by the Islamic State is one prominent example. Conflict areas tend to encourage looting of historic sites, leading to sales of antiquities on the black market. Often the lure of financial gain from the development of sites that contain important heritage outweighs the cultural value that is at risk. One example is the impending destruction of an ancient Turkish cave city after the completion of a dam that will put it under water.

In most parts of the world, environmental threats to cultural heritage are more prevalent than man-made ones. For monuments, statues, and other structures made of stone, weathering is a common means of loss. Precipitation, especially acid rain, and other kinds of exposure to water can lead to the gradual erosion of stone, rotting of wood, and general deterioration of sites and monuments.

National Park Service (NPS) map of the 105 US parks vulnerable to sea level rise. From “Strategies for Coastal Park Adaptation to Climate Change”: http://marineprotectedareas.noaa.gov/pdf/helpful-resources/webinar_beavers_schupp_nps_coastalcasestudies_111413.pdf

Environmental damage caused by climate change is accelerating the destruction. Rising sea levels are predicted to have a particularly devastating impact on many cultural sites. A recent study shows that predicted sea level rise over the next years will put 80% of Icelandic cultural sites at risk. According to the National Park Service (NPS), natural heritage sites in the US are also at risk. The NPS reports 105 parks as “vulnerable to sea level rise.” The effect on weather patterns due to climate change, particularly the increase in severe weather events, could pose major threats to cultural heritage sites beyond normal historical weathering.

How can these various threats be addressed? Archaeological preservation is one common method of physically excavating and preserving important historical artifacts. Moving objects to museums has successfully preserved different forms of cultural heritage throughout time. Digital imaging, practiced here at CHI, is a powerful tool to be used alongside archaeological methods to provide additional, more detailed information for the historical record. Digital representations of cultural heritage sites can be used to monitor the rates of change at these sites as well as preserve their shape and cultural significance. The re-creation of cultural heritage with digital methods also impacts how information is shared: an artifact that may be thousands of miles away can be viewed by anyone anywhere in an accurate digital form. This also allows objects and sites to stay in their original locations while providing access to their digital representations.

From my perspective, a crucial element in furthering the protection of cultural heritage is the legal and political protection of these sites. International law has developed to protect the things that have been identified as most sacred to communities around the world, including human dignity, life, and freedoms. Cultural heritage must be worthy of the same kind of protection every day as well as in wartime. Although some institutions, UNESCO for example, already exist to defend cultural heritage, I believe we must govern the protection of artifacts and sites with laws just as we do in cases of war crimes and sovereignty.

Another lesson I take away from my time at CHI concerns the changes in our interactions with information and knowledge. I am familiar with the usual media in our repositories of knowledge: text, photographs, video, and audio. While these media convey interpretations and descriptions of their subject matter, they rarely can stand as accurate representations of sites and objects. The potential that three-dimensional imaging brings to human interaction with information can be enormous. Many historical and cultural wonders previously limited to a particular geographical area could be made accessible to others, leading to progress in historical and interpretive research. Expanding research on these 3D methods, such as the ongoing work at CHI, can lead to highly effective ways of contributing to this revolution in information.

The Sennedjem Lintel from the Phoebe A. Hearst Museum of Anthropology at the University of California, Berkeley. Lower left shows the surface color and shape. Upper right shows an enhanced surface shape.

CHI has exposed me to a lot of these problems facing world heritage sites but has also introduced me to the preservation and information successes that are possible with different methods and technologies.

Filed under: Guest Blogger, On Location | Tags: Archaeology, knapped tools, lithics, RTI

This is the second post by our guest blogger Dr. Leszek Pawlowicz, an Associate Practitioner in the Department of Anthropology, Northern Arizona University, Flagstaff, AZ, USA. He can be contacted at leszek.pawlowicz@nau.edu. Thank you again, Leszek!

Even in the age of digital photography, archaeology still relies heavily on old-school hand-drawn illustrations for documenting artifacts, particularly for publication. It can be difficult, or even impossible, to get a single photograph that shows all of the artifact’s key details. In my area of interest, knapped stone tools, low relief in small-scale surface topography, high relief in large-scale topography, specular (reflective) materials, variations in material color and contrast across the surface, all conspire to make these artifacts difficult to photograph. A skilled illustrator is capable of creating a drawing that reveals far more detail than even a good standard photograph (Figure 1). But creating such a drawing requires experience, time, and money, often making it impractical.

Figure 1: Comparison of digital photograph and line drawing of knapped stone tool. Courtesy Lance Trask, http://lktrask-media.com/.

Reflectance Transformation Imaging (RTI) is a great way to document and image knapped stone tools. The ability to interactively modify the lighting angle, as well as mathematically manipulate the perceived interaction of light and surface, allows the viewer to see details difficult to photograph. It’s almost the equivalent of having the object in your hands, and turning it at various angles to the light to reveal details of its structure and manufacture. However, one drawback is that you can’t embed an RTI view in a paper or publication unless it’s in electronic format. Also, unlike a drawing that can show all the critical details at once, you may have to move the virtual lighting angle in many different directions to reveal all details. Using data from the custom RTI systems I described in a previous post, I’ve been working on ways to create static images of knapped stone tools with detail comparable to that in a line drawing.
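
To make the idea of changing the lighting angle after capture more concrete, here is a minimal sketch of relighting with the biquadratic Polynomial Texture Map (PTM) model, in which each pixel stores six coefficients and its brightness is a polynomial in the projected light direction. This is only an illustration: the coefficient values and function names are made up, the azimuth and elevation convention is an assumption, and real RTI files (including the HSH files discussed later in this post) use different storage layouts and basis functions.

```python
import math


def light_direction(azimuth_deg, elevation_deg):
    """Project a unit light vector onto the image plane, giving (lu, lv).

    Assumed convention: azimuth is measured counterclockwise from the +x axis,
    elevation is measured up from the image plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return math.cos(el) * math.cos(az), math.cos(el) * math.sin(az)


def ptm_luminance(coeffs, lu, lv):
    """Evaluate the biquadratic PTM polynomial for one pixel."""
    a0, a1, a2, a3, a4, a5 = coeffs
    return a0 * lu * lu + a1 * lv * lv + a2 * lu * lv + a3 * lu + a4 * lv + a5


def relight(ptm_image, azimuth_deg, elevation_deg):
    """Return a 2D list of luminance values for the chosen virtual light."""
    lu, lv = light_direction(azimuth_deg, elevation_deg)
    return [[ptm_luminance(c, lu, lv) for c in row] for row in ptm_image]


if __name__ == "__main__":
    # A hypothetical 1 x 2 "image": one flat pixel and one that brightens
    # as the light moves toward +x.
    tiny = [[(0.0, 0.0, 0.0, 0.0, 0.0, 0.5), (0.0, 0.0, 0.0, 0.4, 0.0, 0.5)]]
    for azimuth in (0, 90, 180):
        print(azimuth, relight(tiny, azimuth, 45))
```

Because the per-pixel coefficients are fixed at capture time, a viewer only has to re-evaluate this polynomial when the virtual light moves, which is what makes interactive relighting practical.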

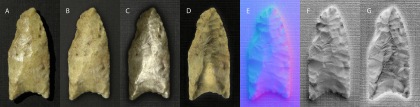

Figure 2: Modern obsidian knapped point. A – Original digital photograph; B – Static RTI view; C – RTI Specular mode; D – RTI Static Multilight mode; E – RTI Normals mode; F – Enhanced RTI Normals mode.

Figure 2 shows a series of images of a modern knapped projectile point fashioned from specular black obsidian, a particularly difficult material to photograph because of its shininess and lack of contrast. Figure 2-A is an original digital photograph, with the lighting coming from the standard upper left direction; while a fair amount of detail is visible, shiny highlights obscure some details, and the overall convex shape of the point interferes with lighting on the right side of the point. Figure 2-B shows an RTI view with lighting from the upper left; while glossy highlights have been eliminated, and more detail is visible on the right, other details are somewhat more subdued in this static view. Figure 2-C uses the RTI specular viewing mode, which imparts an artificially shiny character to the surface. This is actually a superior result for this mode on knapped stone tools – most of the time, it doesn’t look nearly this good (as you’ll see shortly). But this image suffers from lack of detail in some areas, probably because of the artifact’s overall convex surface shape. Figure 2-D uses the RTI Static Multilight mode, where the RTI data is analyzed to determine the optimal blend of multiple light angles to reveal details. Once again, this is a better result than I usually get for knapped stone tools, but some details are still hard to see because of lack of contrast in many areas.

Overall, I have found that the recently added RTI Normals viewing mode leads to the best results. This mode color-codes the surface based on the direction of the surface normal (the perpendicular to the surface) at every point in the image. Figure 2-E was generated using the Normals mode and a second-order Hemispherical Harmonics (HSH) RTI file (standard Polynomial Texture Mapping, or PTM, files produce markedly poorer results). Even in the raw colored state, the amount of detail visible across the entire surface is vastly superior to the other views.
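As a rough illustration of how a normals view can color-code a surface, the sketch below maps each component of a unit normal from the range [-1, 1] to an 8-bit color channel. This is the common convention for normal maps; whether RTIViewer uses exactly this mapping is an assumption on my part, and the function name is hypothetical.

```python
import math


def normal_to_rgb(nx, ny, nz):
    """Map a surface normal to an (R, G, B) triple of 8-bit values.

    Each component of the normalized vector is rescaled from [-1, 1]
    to [0, 255], so x drives red, y drives green, and z drives blue.
    """
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        raise ValueError("normal vector must be non-zero")
    components = (nx / length, ny / length, nz / length)
    # Adding 0.5 before truncating rounds to the nearest integer.
    return tuple(int((c + 1.0) / 2.0 * 255.0 + 0.5) for c in components)


facing_camera = normal_to_rgb(0.0, 0.0, 1.0)  # flat area of the surface
facing_right = normal_to_rgb(1.0, 0.0, 0.0)   # steep edge tilted toward +x
```

Under this convention, flat areas facing the camera come out in a uniform bluish tone, while flake scar edges tilted in different directions take on distinct hues, which is why small changes in surface orientation become easy to see.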
Using standard image enhancement techniques, one can further process the Normals image to generate an extremely detailed view of the artifact’s surface details (Figure 2-F). While this modern artifact was made of a difficult material, the freshness of manufacture, lack of wear, and non-exposure to the elements make the surface features quite sharp and easy to see. What about an actual prehistoric point with a real history?

Figure 3: Molina Spring Clovis point. A – Original digital photograph; B – Static RTI view; C – RTI Specular mode; D – RTI Static Multilight mode; E – RTI Normals mode; F – Enhanced RTI Normals mode; G – Slope-shaded mode.

Figure 3 shows a similar series of images for the Molina Spring Clovis point, collected about 10 years ago in the Apache-Sitgreaves National Forest. Clovis points are approximately 13,500 years old; this one in particular has seen a lot of wear on the flake scars, making them difficult to make out. What’s more, the point’s material (chert) is slightly glossy and very light in color, minimizing contrast in surface details. Figures 3-A through 3-F show the same image processing sequence as Figures 2-A through 2-F; note in particular that the Specular and Static Multilight modes (3-C and 3-D) do not produce especially useful images for this point. The raw Normals image (3-E) brings out details that are difficult or impossible to spot in the previous images, and enhancing the Normals image (3-F) makes those details even easier to see. For Figure 3-G, a MATLAB script was used to generate a modified view based on slope; this brings out certain details not immediately visible in the original Normals image. In my opinion, images like 3-F and 3-G are superior to standard digital photographs of knapped stone tools and could well be used instead of line drawings for publication purposes. Even in cases where line drawings are preferred, these images could provide a useful basis for tracing artifact details, instead of drawing them freehand.

Beyond static images, the normals data can be used to generate a full 3D representation of the artifact’s surface. Some examples of this are visible at my website, along with downloadable 3D files and RTI data files for several projectile points. The 3D surfaces aren’t yet fully accurate, as they can be affected by inaccuracies in the normals calculation and by accumulated errors in the surface fitting. However, these results are already significantly improved over my initial efforts, and I will be working on improving them further. Even in the current form, I believe that it should be possible to extract information that can be used to quantitatively characterize knapped stone tools.
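The slope-shaded view and the 3D reconstruction both start from the same per-pixel normals. The sketch below is not the MATLAB script mentioned above (which is not reproduced here); it is a minimal Python illustration under two assumptions of mine: that slope shading maps the tilt angle of the normal to a gray level, and that a height profile can be built by naively summing slopes along one row of pixels. The function names are hypothetical.

```python
import math


def slope_shade(normal):
    """Gray level (0-255) from surface tilt: flat is white, steep is dark."""
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    # Angle between the normal and the viewing axis (z), in radians.
    cos_tilt = max(-1.0, min(1.0, nz / length))
    tilt = math.acos(cos_tilt)
    # Express tilt as a fraction of 90 degrees, clamped to 1.
    fraction = min(tilt / (math.pi / 2.0), 1.0)
    return int((1.0 - fraction) * 255.0 + 0.5)


def integrate_row(normals_row, pixel_size=1.0):
    """Height profile along one row of pixels, starting at height 0.

    For a surface z = f(x, y) the normal is proportional to
    (-df/dx, -df/dy, 1), so the slope in x is -nx / nz. Slopes of
    neighboring pixels are averaged (trapezoid rule) and summed.
    """
    slopes = [-nx / nz for nx, ny, nz in normals_row]
    heights = [0.0]
    for left, right in zip(slopes, slopes[1:]):
        heights.append(heights[-1] + 0.5 * (left + right) * pixel_size)
    return heights


# A synthetic ramp that rises one unit of height per pixel in x.
ramp = [(-1.0, 0.0, 1.0)] * 4
profile = integrate_row(ramp)
```

Because each height is built on the one before it, any error in a single normal is carried along the rest of the row. This is one simple way to see how error accumulation of the kind described above can arise; more robust surface-fitting methods spread the error over the whole image instead.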

Filed under: Equipment, Guest Blogger, Lighting, Technology | Tags: capture, guest blogger, paste print, Reflectance transformation imaging (RTI), RTIViewer, specular enhancement

Our guest blogger is Dr. Lothar Schmitt, a post-doc in the Digital Humanities Lab at University of Basel in Switzerland. Thank you, Lothar!

For some people early prints are a boring topic, but a few specialists appreciate these crude woodcuts and engravings with their stiffly rendered religious subjects. There are reasons for this unusual predilection: Beginning in about 1400, prints became an increasingly important means to make images affordable for the general public. In addition, printing images stimulated the development of several technical innovations. Among these are ways to reproduce three-dimensional surfaces and to imitate the appearance of precious materials like gold reliefs or brocade textiles.

One such technique is called “paste print.”

With only about 200 examples existing worldwide, this kind of print is rare. It consists of a layer of a slowly hardening oil-based material (Fig. 1, No. 3) that was covered with tin foil and brushed with a yellowish glaze in order to look like leaf gold (Fig. 1, No. 4). All these layers were stuck to a sheet of paper (Fig. 1, No. 1). To produce an image, the surface of an engraved metal plate was coated with printing ink and pressed into the paste. Through this process, the printing ink was transferred as a dark background (Fig. 1, No. 5), while the cut image of the metal plate generated a relief of golden contours and hatchings. Since these layers became brittle over time, most paste prints are heavily damaged (Fig. 1, No. 2). Moreover, the subjects they show are sometimes hard to decipher.

Traditional photographs are not well suited to reproducing paste prints because it is impossible to record the interaction between the light and the barely discernible relief of the print’s surface in a single capture. To document such effects, our team, a Swiss National Science Foundation (SNSF) research group of four people at the Digital Humanities Lab in Basel, Switzerland, decided to try Reflectance Transformation Imaging (RTI). RTI is well suited to revealing the material properties of the prints. However, since RTI is not able to properly reproduce the gloss of a metal surface, we were unsure what results to expect. The first test, however, was very promising.

We traveled from Basel to nearby Zürich, where there is a paste print of an unidentified saint glued into a manuscript at the Zentralbibliothek Zürich (B 245, fol. 6r). The library staff, among them Rainer Walter and Henrik Rörig, were very helpful. Peter Moerkerk, head of the digitization center, even made a high-resolution scan of this print that we could use as a reference image (Fig. 2).

Fig. 2: High-resolution scan of a paste print of an unidentified saint from manuscript B 245 in the Zentralbibliothek Zürich.

For capturing RTIs we constructed a Styrofoam hemisphere with a diameter of 80 cm. On the inside of the hemisphere, there are 58 evenly distributed LEDs that can be triggered in succession. The LEDs are synchronized via a simple control unit that is connected with the flash sync port of the camera. The control unit coordinates with the interval mode of the camera in order to capture a sequence of images automatically. The resulting RTI file shows the subtle surface texture and is instrumental for comprehending the relief and the layered structure of the print (Fig. 3).
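RTI processing needs to know the light direction for each image in the sequence, so the geometry of a dome like this matters. The sketch below shows one way to compute 58 roughly evenly spaced positions on a hemisphere with a radius of 40 cm. The spiral (Fibonacci) layout is an assumption chosen for illustration; it is not a description of how the LEDs in our hemisphere are actually arranged, and the function name is hypothetical.

```python
import math


def hemisphere_lights(count=58, radius_m=0.40):
    """Return (x, y, z) positions spread evenly over a hemisphere.

    Equal steps in height (z) cut a sphere into bands of equal area,
    and stepping the azimuth by the golden angle spreads the points
    around each band without forming visible rows or columns.
    """
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    positions = []
    for i in range(count):
        z = 1.0 - (i + 0.5) / count      # from near the pole down to the rim
        ring = math.sqrt(1.0 - z * z)    # radius of the circle at that height
        phi = i * golden_angle
        positions.append((
            radius_m * ring * math.cos(phi),
            radius_m * ring * math.sin(phi),
            radius_m * z,
        ))
    return positions


lights = hemisphere_lights()
```

Dividing each position by the radius gives the unit light direction for the corresponding image in the capture sequence.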

As we pointed out earlier, the glossy effects of the golden parts appear too dull, but the “specular enhancement” feature of the RTIViewer helps to distinguish between the surface conditions of the different materials that were employed to make the print.
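For readers curious about what specular enhancement does, the following is a minimal sketch of the general idea: a synthetic highlight, computed from the surface normal with a Phong-style term, is added to a dimmed version of the diffuse image. The parameter names and default values are illustrative assumptions and do not correspond to specific RTIViewer settings.

```python
import math


def normalize(vector):
    """Scale a 3-component vector to unit length."""
    length = math.sqrt(sum(c * c for c in vector))
    if length == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return tuple(c / length for c in vector)


def specular_enhance(normal, light, diffuse, kd=0.4, ks=0.7, exponent=50,
                     view=(0.0, 0.0, 1.0)):
    """Blend a diffuse value with a synthetic highlight for one pixel.

    normal, light, view: 3D direction vectors (any length).
    diffuse: relit diffuse intensity for this pixel, in 0..1.
    kd, ks: weights of the diffuse and specular parts.
    exponent: larger values give smaller, sharper highlights.
    """
    n = normalize(normal)
    l = normalize(light)
    v = normalize(view)
    # Halfway vector between the light and the viewing direction.
    h = normalize(tuple(a + b for a, b in zip(l, v)))
    n_dot_h = max(0.0, sum(a * b for a, b in zip(n, h)))
    return kd * diffuse + ks * n_dot_h ** exponent


# Light along the viewing axis: the highlight is at full strength.
on_axis = specular_enhance((0.0, 0.0, 1.0), (0.0, 0.0, 1.0), diffuse=0.5)
# Light from the side: almost no highlight on a flat pixel.
grazing = specular_enhance((0.0, 0.0, 1.0), (1.0, 0.0, 0.0), diffuse=0.5)
```

Because the highlight depends only on the normals, it responds to relief even where the captured images show little gloss, which helps in telling the different surface conditions apart.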

RTIs of two other paste prints in Switzerland and several others in German collections will be captured in 2015 and 2016. If you are interested in our proceedings, please see our web site: http://dhlab.unibas.ch/?research/digital-materiality.html

Filed under: Guest Blogger, Internship | Tags: cultural heritage imaging, digital humanities, history, scientific documentation

Our guest blogger, Matt Hinson, is a junior at the School of Foreign Service at Georgetown University in Washington, D.C. Welcome to CHI, Matt!

As a student majoring in International History, I’m interested in learning methods of interpreting history through the analysis of cultural heritage. Yet I’ve observed it is sometimes difficult to understand how to apply the knowledge I’ve gained through academics. As I learn more about the current threats to major historical sites and landmarks, I have come to realize that the documentation of world heritage sites is a major component of the effort to save them. And working to save the world’s historic and cultural treasures is a valuable application of historical research skills. So when I stumbled upon Cultural Heritage Imaging (CHI), an organization at the forefront of the scientific documentation of cultural treasures, I applied for a summer internship, and here I am.

As someone who would like to further pursue academic research in history, I have realized that learning how to study physical historical artifacts can add to my existing knowledge of how to analyze historical texts. Being introduced to the imaging methods that are featured here at CHI will improve my overall research skills in preparation for future projects. The emerging field of digital humanities is another area in which CHI heavily contributes. As the humanities become more and more connected with technology, I believe it is important that students of the humanities become better acquainted with such technologies. Learning about RTI, photogrammetry, and the other digital imaging methods developed at CHI is a great way to see how the field of the humanities can be transformed by such techniques.

This summer, I hope not only to learn from CHI but also to be useful to the organization in advancing its goals. CHI is working on a number of extremely interesting projects, and I would like to contribute to them as much as I can. I am not a technical expert when it comes to 3D imaging, so I intend to concentrate on expanding the cultural and historical dimensions of CHI’s work in world heritage preservation. I am very much looking forward to my time here at CHI!